If the first Nano Banana made Google feel competitive in AI image generation, Nano Banana 2 feels like the version built for real creative work. Officially named Gemini 3.1 Flash Image, it is designed to combine the speed and cost profile of the Flash family with many of the more advanced image-generation traits Google previously reserved for Nano Banana Pro.

That positioning matters. In practice, Google is not selling Nano Banana 2 as a novelty model for quick doodles. It is presenting it as a high-efficiency production model: something fast enough for iterative workflows, but capable enough for marketing mockups, diagrams, localized assets, and image editing that requires stricter instruction following. The API documentation explicitly describes it as optimized for speed and high-volume developer use cases, while Google’s launch post emphasizes world knowledge, reasoning, and rapid edits.

What makes Nano Banana 2 interesting

The standout idea behind Nano Banana 2 is that it is not only a text-to-image model. It is a conversational image model. Google’s docs describe Nano Banana as Gemini’s native image-generation capability, meaning it can generate and edit images through text, images, or both. In the 3.1 Flash Image version, that expands into a more serious toolkit: higher-resolution outputs, search grounding, better multilingual text rendering, and multi-image workflows.

From the official materials, four upgrades stand out:

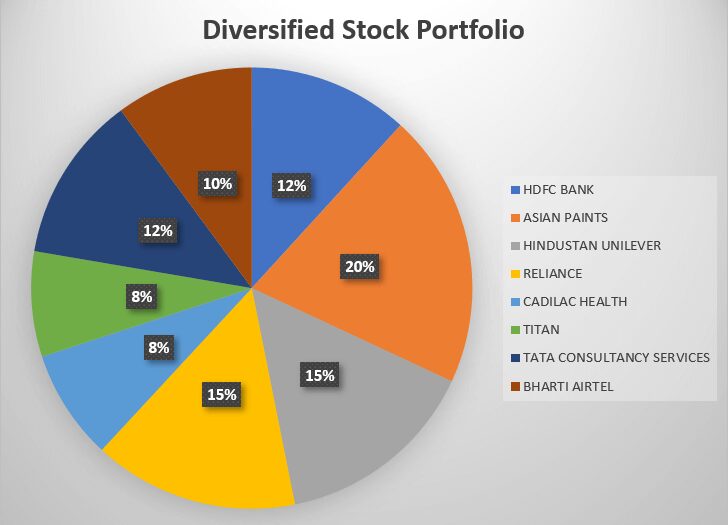

First, search grounding is now deeper. Google says Nano Banana 2 can use Gemini’s real-world knowledge plus real-time web and image search results to render subjects more accurately and generate things like infographics and diagrams. That is a meaningful differentiator versus image models that rely only on static training knowledge.

Second, text in images looks like a priority rather than an afterthought. Google specifically highlights precision text rendering and translation, and the API docs mention improved international text rendering. If you make posters, menus, ads, cards, or social media creatives, this is one of the most commercially relevant capabilities in the release.

Third, resolution and layout control are much stronger. The model supports 512px, 1K, 2K, and 4K outputs, with new tall and ultra-wide aspect ratios such as 1:4, 4:1, 1:8, and 8:1. That range makes the model useful for both rapid ideation at low resolution and final-format asset generation at high resolution.
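To make those options concrete, here is a minimal pre-flight check a team might run before sending a request. It is only a sketch: the names `SIZE_TIERS`, `orientation`, and `check_options` are hypothetical, the size labels are the four tiers named above, and the code does not call any Google API or claim to list every aspect ratio the model accepts.

```python
from fractions import Fraction

# Size tiers named in this article; labels only, not pixel dimensions.
SIZE_TIERS = ("512px", "1K", "2K", "4K")


def orientation(ratio):
    """Classify a 'W:H' aspect-ratio string as 'tall', 'wide', or 'square'."""
    width_text, height_text = ratio.split(":")
    width, height = int(width_text), int(height_text)
    if width <= 0 or height <= 0:
        raise ValueError(f"aspect ratio must use positive integers: {ratio!r}")
    value = Fraction(width, height)
    if value == 1:
        return "square"
    return "wide" if value > 1 else "tall"


def check_options(size, ratio):
    """Return a normalized options dict, or raise ValueError on bad input."""
    if size not in SIZE_TIERS:
        raise ValueError(f"unknown size tier: {size!r}")
    return {"size": size, "aspect_ratio": ratio, "orientation": orientation(ratio)}
```

For example, `check_options("4K", "8:1")` would describe an ultra-wide banner, while `check_options("512px", "1:4")` would describe a tall, low-cost draft.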

Fourth, consistency appears to be a major focus. Google’s launch post says Nano Banana 2 can maintain the resemblance of up to five characters and the fidelity of up to 14 objects in a single workflow. Separately, the image-generation docs say Gemini 3.1 Flash Image can mix up to 14 reference images, including up to 10 object images and 4 character images for consistency. That makes it more relevant for storyboarding, catalog composition, brand asset generation, and recurring-character workflows than a typical one-shot image model.
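The reference-image limits quoted from the docs (14 total, of which up to 10 object images and 4 character images) can be expressed as a small budget check. This is an illustrative sketch with hypothetical names (`check_reference_budget` and the three constants); it encodes only the figures cited above and is not part of any Google SDK.

```python
# Limits as quoted in this article from the image-generation docs.
MAX_TOTAL_REFERENCES = 14
MAX_OBJECT_REFERENCES = 10
MAX_CHARACTER_REFERENCES = 4


def check_reference_budget(object_images, character_images):
    """Return a list of human-readable problems; an empty list means OK."""
    problems = []
    if object_images < 0 or character_images < 0:
        problems.append("image counts cannot be negative")
        return problems
    if object_images > MAX_OBJECT_REFERENCES:
        problems.append(
            f"{object_images} object images exceeds the limit of {MAX_OBJECT_REFERENCES}"
        )
    if character_images > MAX_CHARACTER_REFERENCES:
        problems.append(
            f"{character_images} character images exceeds the limit of {MAX_CHARACTER_REFERENCES}"
        )
    total = object_images + character_images
    if total > MAX_TOTAL_REFERENCES:
        problems.append(
            f"{total} reference images exceeds the limit of {MAX_TOTAL_REFERENCES}"
        )
    return problems
```

A storyboard with 10 product shots and 4 recurring characters passes with an empty list, while adding a fifth character reference reports both the per-type and the total limit.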

How good is it, based on the public evidence?

The strongest evidence available right now is still Google’s own model card, so it should be read as informative but not fully independent. That said, the numbers are notable. In Google’s published benchmark tables, Gemini 3.1 Flash Image scores above Gemini 2.5 Flash Image and above several named competitors on Overall Preference, Visual Quality, and Infographics (Factuality). In editing benchmarks, it also posts strong results in General Editing, Character Editing, Stylization, and Multi-Input tasks.

The most important caveat is that these are Google-selected benchmarks using Google’s evaluation methodology, not neutral third-party leaderboards. So the safest conclusion is not “Nano Banana 2 is the unquestioned best image model,” but rather: Google has made a credible case that Nano Banana 2 is one of the strongest fast image models now publicly documented, especially for editing, text rendering, and grounded commercial visuals.

External coverage broadly supports that reading. Reuters described the model as leaning on Google’s faster and cheaper Flash stack while improving instruction following and detail sharpness. The Verge highlighted the move to bring more advanced image capabilities to free users, alongside better text generation, localization, and creative control.

Where Nano Banana 2 looks strongest

Based on the official docs, Nano Banana 2 looks especially strong for:

- marketing creatives with embedded text
- localized visual assets
- infographics and diagrams
- multi-reference image editing
- high-volume creative iteration
- storyboard-like workflows with recurring subjects

This is also where the Flash positioning makes sense. If Nano Banana Pro remains the better choice for the most exacting “studio-grade” image work, Nano Banana 2 appears to be the model more teams will actually use day to day, because it aims for a better balance of quality, speed, and cost. That is also reflected in Google’s own model-selection guidance, which calls Gemini 3.1 Flash Image the go-to image generation model for the best overall intelligence-to-cost-to-latency balance.

Pricing and availability

For developers, the current official Gemini API standard pricing is $0.067 per image at 1K resolution, $0.101 per image at 2K, and $0.151 per image at 4K, with lower batch pricing available. Google also documents 512px output pricing at $0.045 per image. On the consumer side, Google says Nano Banana image generation is available in all languages and countries where the Gemini app is available, and The Verge reports that Nano Banana 2 is rolling out broadly to free users across Google AI surfaces.
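As a rough budgeting aid, the per-image prices above can be turned into a quick estimate for a mixed workload. The sketch below is an assumption-laden illustration: `PRICE_PER_IMAGE` and `estimate_cost` are hypothetical names, the prices are copied from this article rather than fetched from Google, and batch discounts are not modeled.

```python
from decimal import Decimal

# Standard per-image prices (USD) as quoted in this article; may change.
PRICE_PER_IMAGE = {
    "512px": Decimal("0.045"),
    "1K": Decimal("0.067"),
    "2K": Decimal("0.101"),
    "4K": Decimal("0.151"),
}


def estimate_cost(image_counts):
    """Sum the cost of a workload given as {size_label: number_of_images}."""
    total = Decimal("0")
    for size, count in image_counts.items():
        if size not in PRICE_PER_IMAGE:
            raise ValueError(f"no price listed for size {size!r}")
        if count < 0:
            raise ValueError("image counts cannot be negative")
        total += PRICE_PER_IMAGE[size] * count
    return total


# Example workload: 500 low-resolution drafts, then 20 final 4K renders.
total = estimate_cost({"512px": 500, "4K": 20})
print(f"${total:.2f}")  # prints $25.52
```

Using `Decimal` rather than floats keeps the sub-cent prices exact when they are multiplied across hundreds of images.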

Limitations

Google’s own model card says Nano Banana 2 can still hallucinate and may occasionally be slow or time out. That is worth taking seriously, especially for factual infographics or precise branded assets. Its strongest new capability, grounding to search and image results, is powerful, but grounding does not guarantee correctness, so users should still verify outputs when accuracy matters.

There is also a trust-and-transparency angle. Google says images created with Gemini use SynthID, and Google is pairing provenance work with C2PA Content Credentials. That is a positive sign for enterprise and publishing use, though provenance tooling is still an evolving area across the industry.

Final verdict

Nano Banana 2 looks less like a flashy demo model and more like a practical workhorse. Its most compelling strengths are not just “pretty pictures,” but usable pictures: readable text, multilingual editing, stronger consistency, real-time grounding, and flexible resolution control. That makes it unusually relevant for marketers, designers, content teams, and developers building production workflows.

My review, based on the currently available public material, is this: Nano Banana 2 is probably one of the most important AI image releases of early 2026, not because it is the most artistic model in every scenario, but because it may be the best-balanced image model Google has shipped for real-world speed, editing, and commercial utility. The one thing still missing is more independent long-form testing, but the official documentation and early reporting both point in the same direction: this is a substantial upgrade, not a minor refresh.